The debate over edge computing vs cloud computing is more pronounced than ever. As organizations work to optimize performance, minimize latency, and handle data efficiently, the choice of technology becomes a key strategic decision. Both computing models have distinct advantages, and understanding their fundamental differences is important for aligning that choice with your organization’s objectives.

Cloud computing has long been the gold standard for scalable, cost-effective data storage and application deployment. It centralizes computing power in remote data centers, making it possible to access huge resources and services over the internet. Edge computing, on the other hand, brings processing power closer to the source of data generation (IoT devices, sensors, or mobile users) and reduces the distance data needs to travel, so it can be processed in real time.

This blog looks at how each of these technologies operates, their pros and cons, and, most critically, how to choose the one that best suits your business requirements. Whether you are a startup looking for agility or an enterprise processing big data in real time, this comparison will help you make smart, future-proof tech choices.

How Does Each Model Handle Data Processing And Storage?

Edge Computing processes and stores data by moving computation nearer to where the data is created—e.g., sensors, IoT devices, or local servers. This shortens the distance data has to travel, enabling instant response and localized decision-making. It conserves bandwidth and can operate independently of centralized data centers, making it perfect for environments that require speed and reliability.

Cloud Computing, in contrast, processes and stores data in large, centralized data centers reached over the internet. It provides nearly unlimited scalability, which makes it a good fit for holding huge datasets, running complex analytics, and supporting distributed teams. It can, however, add latency to time-critical work, depending on network speed and distance from the data center.

In applications where Real-Time Data Processing is a must, such as autonomous cars, smart manufacturing, or remote medicine, edge computing tends to have the advantage. However, most companies use a combination of both models to balance the responsiveness of the edge against the scalability and capabilities of the cloud.
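As a rough illustration of how an edge node can make a local decision and conserve bandwidth, the sketch below reduces a window of raw sensor readings to a single summary record before anything is sent to the cloud. The function names, the alarm threshold, and the sample readings are hypothetical and chosen only for this example.

```python
from statistics import mean


def summarize_window(readings):
    """Reduce a window of raw readings to one compact summary record."""
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": round(mean(readings), 2),
    }


def edge_process(readings, alarm_threshold):
    """Decide locally whether to raise an alarm and build the small cloud payload."""
    # Local, immediate decision: no round trip to a data center is needed.
    alarm = any(value > alarm_threshold for value in readings)
    # Only the summary, not every raw reading, would be uploaded.
    return alarm, summarize_window(readings)


# Hypothetical temperature samples from one sensor over one time window.
window = [21.0, 21.5, 22.0, 35.0, 21.5]
alarm, summary = edge_process(window, alarm_threshold=30.0)
print(alarm, summary)
```

Here five raw readings become one record for the cloud, while the alarm decision is taken on the spot. A real deployment would also need buffering, retries, and a transport layer, which are left out of this sketch.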

What Is The Main Difference Between Edge Computing And Cloud Computing?

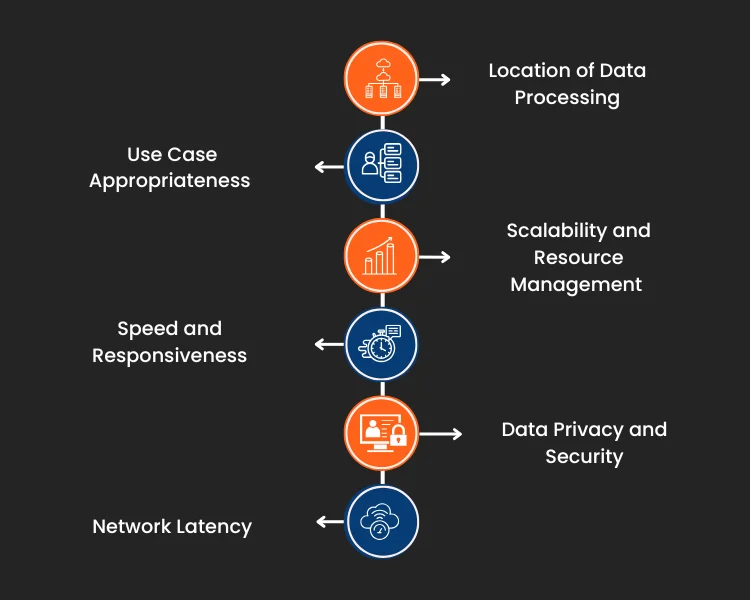

The primary difference between edge computing and cloud computing is where data processing occurs. Cloud computing processes data in far-away data centers, whereas edge computing processes data near its source. This fundamental difference affects speed, cost, scalability, and performance, all of which matter when choosing the right solution for your business application.

1. Location of Data Processing

Cloud computing processes data within centralized data centers, often far from the user or device. Edge computing, by contrast, processes data locally (on devices, gateways, or nearby servers), minimizing the need to send data back and forth.

2. Speed and Responsiveness

Edge computing is built for low latency, which makes it well suited to applications that must act immediately. Because data does not have to travel far, decisions can be made faster than with the cloud’s more centralized approach.

3. Network Latency

One of the most notable advantages of edge computing is reduced network latency. By processing data at or near its source, edge computing avoids the delays that typically occur when sending data to remote cloud servers.

4. Scalability and Resource Management

Cloud computing is better at scalability, with almost unlimited resources on an on-demand basis. Edge computing, though scalable, generally needs more localized infrastructure planning, which can prevent instant scaling in certain situations.

5. Data Privacy and Security

Edge computing can improve data privacy by keeping sensitive data local. Cloud computing, although secure, may require sending data across public networks, creating potential privacy and compliance issues.

6. Use Case Appropriateness

Cloud computing suits data-intensive workloads, analytics, and collaboration from remote locations. Edge computing is more suited for real-time applications such as industrial automation, remote monitoring, and smart devices that depend upon immediate data processing.
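To put rough numbers on the latency points above (2 and 3), the sketch below estimates the best-case round-trip propagation delay over optical fiber. It assumes a signal speed of about 200,000 km/s, roughly two-thirds of the speed of light in a vacuum, and the two distances are hypothetical. Real-world latency would be higher, since routing, queuing, and processing time are ignored here.

```python
# Approximate signal speed in optical fiber (about two-thirds of c).
FIBER_SPEED_KM_PER_S = 200_000


def round_trip_ms(distance_km):
    """Best-case round-trip propagation delay in milliseconds."""
    return 2 * distance_km / FIBER_SPEED_KM_PER_S * 1000


# Hypothetical distances: an edge gateway 1 km away vs a data center 2,000 km away.
edge_rtt = round_trip_ms(1)
cloud_rtt = round_trip_ms(2000)
print(f"edge: {edge_rtt:.2f} ms, cloud: {cloud_rtt:.2f} ms")
```

Under these assumptions the round trip to the distant data center costs about 20 ms in propagation alone, while the nearby gateway's share is negligible. That gap is the part of latency that no amount of server capacity in the cloud can remove.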

Which Is More Secure: Edge Computing Or Cloud Computing?

Security in edge computing compared to cloud computing is largely a function of use case, deployment model, and how data is handled across the system. Cloud computing benefits from established, centralized security practices, such as advanced encryption, firewalls, and around-the-clock monitoring. Additionally, cloud providers have specialized security personnel and compliance systems in place to secure large-scale environments, which makes the cloud a good fit for companies that value centralized management and simplified security administration.

Edge computing, although improving performance and lowering latency, brings a more distributed architecture that can raise the number of potential points of entry for attackers. But edge computing also has the benefit of local data isolation, minimizing exposure to internet-based threats, particularly for applications that need on-premises data protection.

The question of which is safer becomes even more complex with IoT Integration. IoT devices tend to rely on edge computing for real-time responsiveness, but they also increase the attack surface. Protecting these devices and their edge networks requires a strong approach based on authentication, endpoint security, and routine updates. Cloud infrastructures, in comparison, can provide more centralized control but may not deliver the responsiveness many IoT systems require. All in all, the best security solution is most likely a hybrid that takes the best of both edge and cloud computing.
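As one hedged example of what device authentication can look like in practice, the sketch below signs each device message with a shared secret using HMAC-SHA256 from Python's standard library, so that an edge gateway can reject tampered or forged messages. The key, the payload fields, and the function names are hypothetical. A production system would also need secure key provisioning, key rotation, and replay protection, none of which are shown.

```python
import hashlib
import hmac
import json


def sign_message(secret_key: bytes, payload: dict) -> str:
    """Return a hex HMAC-SHA256 signature over a canonical JSON encoding."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hmac.new(secret_key, body, hashlib.sha256).hexdigest()


def verify_message(secret_key: bytes, payload: dict, signature: str) -> bool:
    """Recompute the signature and compare it in constant time."""
    expected = sign_message(secret_key, payload)
    return hmac.compare_digest(expected, signature)


# Hypothetical shared secret and sensor message, for illustration only.
device_key = b"example-device-secret"
message = {"device_id": "sensor-17", "temperature": 22.5}
signature = sign_message(device_key, message)

tampered = dict(message, temperature=99.9)
print(verify_message(device_key, message, signature))   # genuine message
print(verify_message(device_key, tampered, signature))  # altered message
```

The gateway accepts only messages whose signature matches, so a device without the key cannot inject readings and an attacker cannot alter them in transit without detection.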

What Are The Benefits Of Adopting A Hybrid Edge-Cloud Architecture?

Hybrid edge-cloud architecture unites the centralized capabilities of cloud computing and the localized effectiveness of edge computing. Through this method, companies can process important data near the source for quicker response times while still accessing the cloud for extensive data analysis, long-term storage, and scalability across the globe. It’s especially valuable in manufacturing, healthcare, and logistics industries where real-time decision-making and big data processing need to complement each other.

One of the biggest strengths of this model is its flexibility. A hybrid configuration allows companies to distribute workloads according to performance, latency, and security needs. Time-sensitive tasks can be processed at the edge, while less critical processes can be sent to the cloud. This dynamic distribution not only maximizes resource utilization but also improves the overall dependability and responsiveness of systems across various environments.
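A simple placement rule along these lines might look like the sketch below. The `Workload` fields, the 50 ms cloud round-trip figure, and the example workloads are assumptions made for illustration, not measurements or a recommended policy.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    max_latency_ms: float  # slowest response the task can tolerate
    sensitive_data: bool   # True if the data should stay on-premises


def place(workload: Workload, cloud_round_trip_ms: float = 50.0) -> str:
    """Return "edge" or "cloud" for a workload under a simple two-rule policy."""
    if workload.sensitive_data:
        return "edge"  # keep sensitive data local
    if workload.max_latency_ms < cloud_round_trip_ms:
        return "edge"  # the cloud round trip alone would miss the deadline
    return "cloud"     # everything else benefits from cloud scale


# Hypothetical workloads.
workloads = [
    Workload("robot-arm-control", max_latency_ms=10, sensitive_data=False),
    Workload("patient-vitals-alerts", max_latency_ms=200, sensitive_data=True),
    Workload("monthly-sales-report", max_latency_ms=60_000, sensitive_data=False),
]
placements = {w.name: place(w) for w in workloads}
print(placements)
```

In practice the policy would weigh more factors, such as cost, available edge capacity, and regulatory requirements, but the principle is the same: each workload goes where its latency and security needs are best met.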

With the increasing demand for data-based services and Internet of Things applications, a hybrid cloud-edge approach offers a solution for the future. It provides improved operational efficiency, lower bandwidth costs, and easier compliance by keeping sensitive data on-premises when required. For most enterprises, this balance is the key to being agile, secure, and scalable in a connected world.

What Do Industry Trends Suggest About Future Adoption?

Industry trends suggest that companies are increasingly gravitating toward a hybrid model, combining both edge and cloud computing to address changing needs. With technologies maturing and data volumes increasing, the emphasis is on solutions that provide speed, agility, and localized smarts—particularly in spaces such as IoT, AI, and real-time analytics. The debate is no longer simply about one model versus the other but about optimizing both to the fullest.

Conclusion

With advancing technology, the argument of Edge Computing vs Cloud Computing is now not “which is better” but “how can they complement each other.” Both models have unique strengths: cloud computing provides scalability and centralized management, whereas edge computing provides speed, responsiveness, and localized decision-making. Selecting the proper architecture is a function of your business objectives, the type of data you are dealing with, and how quickly you must respond to that data.

For most organizations, a hybrid model is becoming the most feasible option. By judiciously mixing edge and cloud resources, Revinfotech helps companies realize the optimal blend of performance, cost, and flexibility. Whether you’re looking to enable real-time applications, drive user experiences, or future-proof your infrastructure, matching your technology strategy to your operational requirements is the key to long-term success.